A Story of Basis and Kernel - Part I: Function Basis

1. Review of Basis Concept

We know that everything in the world can be decomposed into a combination of basic elements. For example, water is a combination of hydrogen and oxygen. Similarly, in mathematics, a basis is used to represent various things in a simple and unified way.

In $\mathbb{R}^n$ space, we can use $n$ linearly independent vectors to represent any vector by linear combination. These $n$ independent vectors can be viewed as a basis. There are infinitely many bases of $\mathbb{R}^n$. Among them, bases whose vectors are orthogonal to each other are of special interest. For example, $\{\mathbf{e}_i\}_{i=1}^n$ is a special basis with mutually orthogonal basis vectors of the same length, where $\mathbf{e}_i$ is a vector that has all zero entries except the $i$th entry, which equals 1.

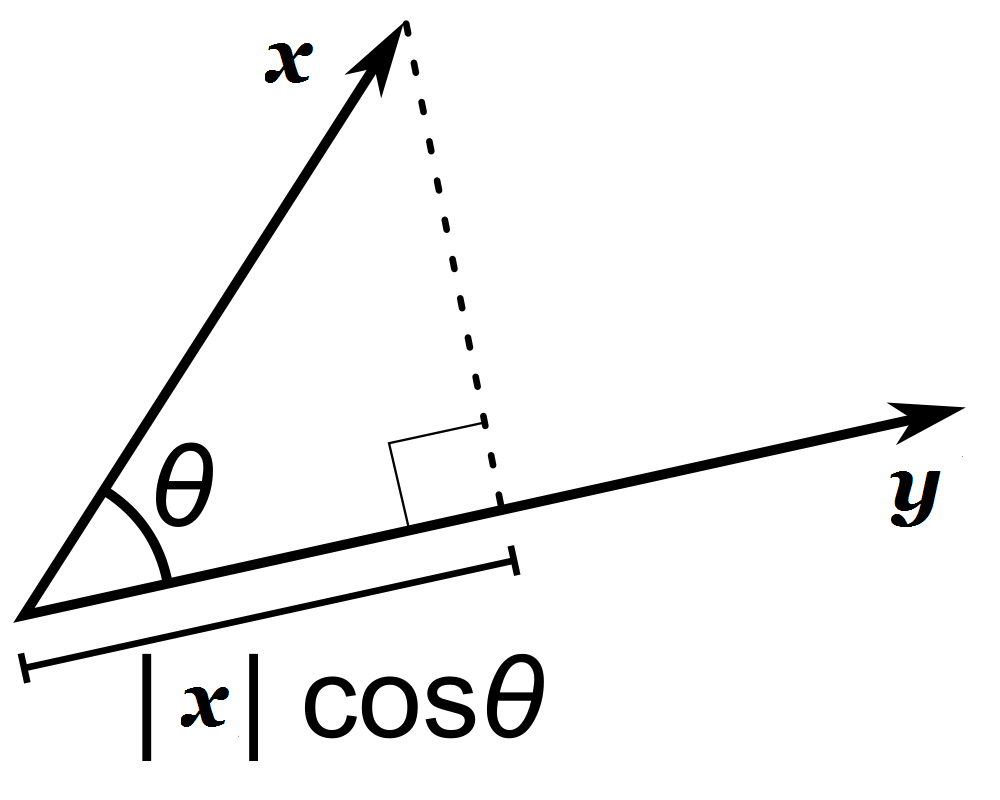

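The inner product operator measures the similarity between vectors. For two vectors $\mathbf{x}$ and $\mathbf{y}$, the inner product is the projection of one vector onto the other, scaled by the length of the latter:

$$\langle \mathbf{x}, \mathbf{y} \rangle = |\mathbf{x}|\,|\mathbf{y}| \cos\theta$$

where $\theta$ is the angle between the two vectors.

If $\mathbf{x} = (x_1, \dots, x_n)$ and $\mathbf{y} = (y_1, \dots, y_n)$, we can get

$$\langle \mathbf{x}, \mathbf{y} \rangle = \sum_{i=1}^{n} x_i y_i$$

So far this has been a review of vector bases. This knowledge can also be extended to functions and function spaces.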
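As a quick numerical check of the vector case, the sketch below compares the coordinate form of the inner product with the geometric form, and rebuilds a vector from its coordinates in the standard basis. The example vectors and all variable names are illustrative assumptions, not part of the original discussion.

```python
import math
import numpy as np

# Two example vectors in R^2 (chosen arbitrarily for illustration).
x = np.array([3.0, 4.0])
y = np.array([4.0, 3.0])

# Coordinate form: sum of x_i * y_i.
algebraic = float(np.dot(x, y))

# Geometric form: |x| |y| cos(theta), with theta computed independently
# from the polar angles of the two vectors.
theta = math.atan2(x[1], x[0]) - math.atan2(y[1], y[0])
geometric = float(np.linalg.norm(x) * np.linalg.norm(y) * math.cos(theta))

# Standard basis: the rows of the identity matrix are e_1 and e_2.
E = np.eye(2)
coords = E @ x              # coords[i] = <x, e_i>
reconstructed = coords @ E  # sum_i coords[i] * e_i

print(algebraic, geometric, reconstructed)
```

Because the standard basis is orthonormal, each coordinate is simply the inner product of the vector with the corresponding basis vector; the same idea reappears for functions later on.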

2. Function Basis

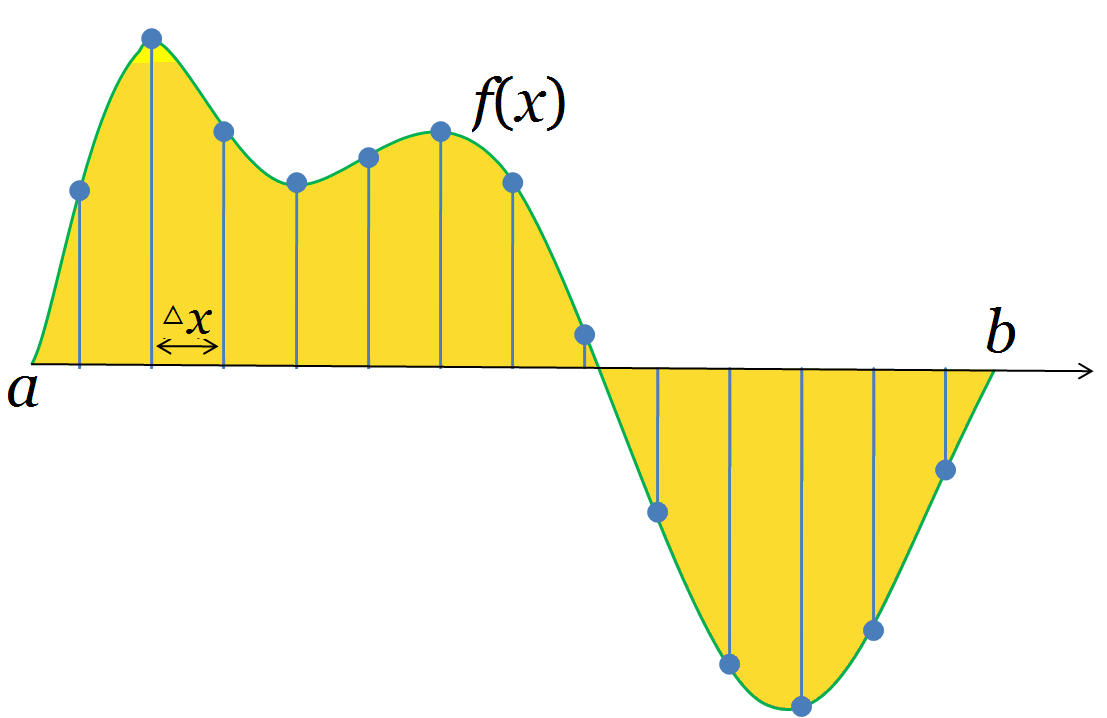

A function is an infinite vector, as the accompanying figure shows.

For a function $f(x)$ defined on the interval $[a, b]$, we take samples at spacing $\Delta x$. If we sample the function at points $a, x_1, \dots, x_n, b$, then we can transform the function into a vector $(f(a), f(x_1), \dots, f(x_n), f(b))^T$. As $\Delta x \to 0$, the vector approximates the function more and more closely, and in the limit it becomes infinite-dimensional.

The above analysis assumes $x$ to be a real number, but it still holds when $x$ is a vector. In this article, we use bold font such as $\mathbf{x}$ to denote a vector in $\mathbb{R}^n$ space; use $f$ to denote the function itself, namely the infinite vector; and use $f(\mathbf{x})$ to denote the evaluation of the function at point $\mathbf{x}$. Unless stated otherwise, the evaluation of a function is a real number.

Since functions are so close to vectors, we can also define the inner product of functions similarly. For two functions $f$ and $g$ sampled at spacing $\Delta x$, the inner product may be defined as

$$\langle f, g \rangle = \lim_{\Delta x \to 0} \sum_i f(x_i)\, g(x_i)\, \Delta x = \int f(x)\, g(x)\, dx$$

For a vector, the dimension index is discrete: we have the first, second, … dimensions, but no 0.5th or 1.5th dimension. For functions, however, the dimension index is continuous rather than discrete. Thus we use the spacing between adjacent dimensions (i.e., $\Delta x$) for normalization.
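The sampled definition can be tried directly. The following is a minimal sketch that approximates the inner product of two functions on an interval by a sum of sampled products times the spacing. The helper name `inner_product`, the use of midpoint samples, and the test functions are assumptions made for illustration.

```python
import numpy as np

def inner_product(f, g, a, b, n=100_000):
    """Approximate <f, g> on [a, b] by sum_i f(x_i) g(x_i) * dx.

    Assumption: samples are taken at the midpoints of n equal cells,
    which is one convenient choice among many.
    """
    dx = (b - a) / n
    x = a + dx * (np.arange(n) + 0.5)
    return float(np.sum(f(x) * g(x)) * dx)

# sin and cos are orthogonal on [0, 2*pi]: the inner product is close to 0.
ip_sin_cos = inner_product(np.sin, np.cos, 0.0, 2.0 * np.pi)

# <x, x^2> on [0, 1] is the integral of x^3, i.e. 1/4.
ip_x_x2 = inner_product(lambda x: x, lambda x: x ** 2, 0.0, 1.0)

print(ip_sin_cos, ip_x_x2)
```

Without the factor `dx`, the sum would grow without bound as the sampling gets finer, which is why the spacing is needed as a normalization.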

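The expression of the function inner product appears everywhere, with different meanings in different contexts. For example, if $x$ is a continuous random variable with probability density function $f(x)$, i.e., $f(x) \ge 0$ and $\int f(x)\, dx = 1$, then the expectation is

$$E[g(x)] = \int f(x)\, g(x)\, dx = \langle f, g \rangle$$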
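To illustrate the expectation as an inner product, here is a small sketch that takes the density to be the standard normal and $g(x) = x^2$, so the expectation should come out close to 1 (the variance). The choice of density, the truncation of the real line to $[-10, 10]$, and the variable names are all assumptions of this example.

```python
import numpy as np

# Truncate the real line to [-10, 10]; the normal tails beyond are negligible.
a, b, n = -10.0, 10.0, 200_000
dx = (b - a) / n
x = a + dx * (np.arange(n) + 0.5)  # midpoint samples

# Assumed density: standard normal f(x).
pdf = np.exp(-0.5 * x ** 2) / np.sqrt(2.0 * np.pi)
g = x ** 2

total_mass = float(np.sum(pdf) * dx)        # integral of f, close to 1
expectation = float(np.sum(pdf * g) * dx)   # <f, g> = E[x^2], close to 1

print(total_mass, expectation)
```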

Similar to a vector basis, we can use a set of functions to represent other functions. The difference is that in a vector space we only need finitely many vectors to construct a complete basis, but in a function space we may need infinitely many basis functions. Two functions are regarded as orthogonal if their inner product is zero. In a function space, we can also have a basis whose functions are mutually orthogonal.

3. Example: Fourier Series

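Let the basis functions $\{h_p\}$ ($p$ is an integer) be

$$h_p(x) = e^{i 2\pi p x / T}$$

defined on the interval $[0, T]$. Here $i$ is the imaginary unit. These functions span a function space, and any square-integrable function defined on the interval $[0, T]$ can be represented as a linear combination of the basis functions. We can prove that any two distinct basis functions are orthogonal (for complex-valued functions, the second argument is conjugated when calculating the inner product):

$$\langle h_p, h_q \rangle = \int_0^T h_p(x)\, \overline{h_q(x)}\, dx = \int_0^T e^{i 2\pi (p - q) x / T}\, dx = 0$$

where $p \neq q$ and $p, q \in \mathbb{Z}$. The "length" of a basis function (strictly, its squared norm) is

$$\langle h_p, h_p \rangle = \int_0^T 1\, dx = T$$

If a function $f(x)$ defined on the interval $[0, T]$ is within the space, it can be written as

$$f(x) = \sum_{p=-\infty}^{\infty} c_p\, h_p(x)$$

Since

$$\langle f, h_q \rangle = \sum_{p=-\infty}^{\infty} c_p \langle h_p, h_q \rangle = c_q T$$

the coefficient can be calculated as

$$c_q = \frac{1}{T} \langle f, h_q \rangle = \frac{1}{T} \int_0^T f(x)\, e^{-i 2\pi q x / T}\, dx$$

which is the Fourier series.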
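The orthogonality and the coefficient formula can be checked numerically. The sketch below assumes complex exponential basis functions of the form $h_p(x) = e^{i 2\pi p x / T}$ on $[0, T]$ with $T = 2$; the period, the grid size, the test function, and the helper names `h` and `inner` are assumptions chosen for illustration.

```python
import numpy as np

T = 2.0                      # assumed period
n = 4096
dx = T / n
x = dx * np.arange(n)        # uniform samples on [0, T)

def h(p):
    """Sampled basis function h_p(x) = exp(i * 2*pi * p * x / T)."""
    return np.exp(2j * np.pi * p * x / T)

def inner(u, v):
    """Sampled complex inner product; the second argument is conjugated."""
    return np.sum(u * np.conj(v)) * dx

ortho = inner(h(2), h(5))    # distinct basis functions: close to 0
length = inner(h(3), h(3))   # same basis function: close to T

# A test function built from two basis functions with known coefficients.
f_vals = 1.5 * h(1) - 0.5j * h(-2)

# Recover the coefficients as c_q = <f, h_q> / T.
c_1 = inner(f_vals, h(1)) / T     # close to 1.5
c_m2 = inner(f_vals, h(-2)) / T   # close to -0.5j
c_0 = inner(f_vals, h(0)) / T     # close to 0

print(ortho, length, c_1, c_m2, c_0)
```

Projecting onto each basis function and dividing by its "length" picks out exactly one coefficient, because every other term in the expansion is orthogonal to that basis function.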

4. Example: Wavelet Analysis

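Define the function $\psi(x)$ on $\mathbb{R}$ as

$$\psi(x) = \begin{cases} 1 & 0 \le x < \tfrac{1}{2} \\ -1 & \tfrac{1}{2} \le x < 1 \\ 0 & \text{otherwise} \end{cases}$$

Define a series of functions for every pair of integers $n, k$:

$$\psi_{n,k}(x) = 2^{n/2}\, \psi(2^n x - k)$$

That is, we rescale $\psi$ by $2^n$ and shift it by $k$ in the rescaled coordinate. We can show that

$$\langle \psi_{n,k}, \psi_{m,l} \rangle = \int_{-\infty}^{\infty} \psi_{n,k}(x)\, \psi_{m,l}(x)\, dx = \delta_{nm}\, \delta_{kl}$$

where $\delta$ is the Kronecker delta, so the functions are mutually orthogonal with unit length.

Any square-integrable function on $\mathbb{R}$ can be represented as a linear combination of the $\psi_{n,k}$. By the same technique as in the former example, we can also obtain the analytical expression of the coefficient for each basis function, which is the Haar wavelet analysis.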
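The orthogonality of the Haar functions can also be verified numerically. The sketch below assumes the standard Haar mother wavelet ($+1$ on $[0, 1/2)$, $-1$ on $[1/2, 1)$, $0$ elsewhere) and the scaled family $2^{n/2}\psi(2^n x - k)$; the grid, the index pairs tested, and the helper names `psi`, `psi_nk`, and `inner` are illustrative assumptions.

```python
import numpy as np

def psi(x):
    """Haar mother wavelet: +1 on [0, 1/2), -1 on [1/2, 1), 0 elsewhere."""
    x = np.asarray(x, dtype=float)
    return np.where((x >= 0.0) & (x < 0.5), 1.0,
                    np.where((x >= 0.5) & (x < 1.0), -1.0, 0.0))

def psi_nk(n, k, x):
    """Scaled and shifted wavelet 2^(n/2) * psi(2^n * x - k)."""
    return 2.0 ** (n / 2) * psi(2.0 ** n * x - k)

# Midpoint grid on [-4, 4); the dyadic spacing keeps the samples away from
# the jump points of the wavelets used below.
m = 2 ** 16
a, b = -4.0, 4.0
dx = (b - a) / m
x = a + dx * (np.arange(m) + 0.5)

def inner(u, v):
    """Sampled inner product of two real functions on the grid."""
    return float(np.sum(u * v) * dx)

norm_sq = inner(psi_nk(1, 2, x), psi_nk(1, 2, x))           # close to 1
cross_same_scale = inner(psi_nk(1, 2, x), psi_nk(1, 3, x))  # close to 0
cross_scales = inner(psi_nk(0, 0, x), psi_nk(2, 1, x))      # close to 0

print(norm_sq, cross_same_scale, cross_scales)
```

Wavelets at the same scale have disjoint supports, while a finer wavelet sits inside a region where the coarser one is constant, so its positive and negative halves cancel.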

In the next part, the fundamentals of kernel functions and kernel methods will be discussed.